Concept by José Pereira Leal. Illustration generated with DALL·E.

“The purpose of a thought-experiment … is not to predict the future … but to describe reality, the present world.” – Ursula K. Le Guin

A few weeks ago I watched a video of a humanoid robot learning to fight. A Chinese-made bipedal machine, trained by reinforcement learning, discovering how to destabilise another bipedal opponent. The researchers were proud (rightly so) and the comments were enthusiastic. But I found myself, beyond being horrified by the very idea of teaching machines to fight, unable to move past a single question: who, in that room, had spent serious time imagining what comes next?

And when I think about “what comes next”, I am not just thinking of the business opportunities, of what China’s lead in replacing its workforce with robots means as it faces a looming demographic crisis, or of the dystopian futures dominated by robotic armies that films like “Terminator” imagined. This is all important, obviously. I am thinking about what comes next for the specific, granular, human person. What does a security guard in Beijing, Riyadh, Lisbon or New York do on a Tuesday morning, five years from now, when the thing that replaced him can also restrain him if he objects?

Around the same time, I read yet another confident prediction from a prominent technologist about AI eliminating millions of jobs. There is a new one almost every other day. The number was large, the tone was somewhere between regret and inevitability, and the analysis stopped precisely where I think it should have started. We were told what would happen at the level of labour markets, but we were told nothing about what it means to organise a day, to build an identity, or to explain to a child why she should do her homework.

I am not naive about disruption. I have spent thirty years in genomics and diagnostics, working to change what is possible in medicine, knowing that changing what is possible always changes something else too. I am not a modern-day Luddite with a pitchfork wishing to burn away inevitable change and disruption. But I see little imagination in the discussions of possible or probable futures. Disciplined, concrete, granular imagination. And I think we have largely stopped doing it.

We have not always been this way. There is a tradition, serious and hard-won, of scientists stopping mid-advance to ask whether they should continue. In 1974, a group of molecular biologists did something almost unthinkable by today’s standards: they called a voluntary moratorium on their own research. Recombinant DNA technology had just become possible, and rather than racing ahead, Paul Berg and his colleagues paused, convened at Asilomar in 1975, and attempted to think before they acted. I have had the privilege, over the years, of meeting people who were in those conversations, a thread I followed in an earlier piece, Salty Rivers into the desert. What strikes me now is not the specific biosafety questions they were addressing, but that they took seriously the obligation to imagine.

The questions we face today are not hypothetical either. We can already select embryos for genetic characteristics. We are moving, faster than most people realise, towards a moment when a parent could plausibly begin to ask: can I choose the sex of my child? And, as the science of complex trait selection advances, their height? Their skin colour? These are not yet routine engineering decisions, but they are no longer pure thought experiments either. They are on a trajectory that scientists and clinicians are actively shaping. Those closest to these capabilities have, I would argue, an obligation not merely to regulate but to imagine: to write the scene, however uncomfortable, of what they are enabling.

I am privileged to participate in several discussion forums where these questions come up regularly. The people involved are all experienced, serious, thoughtful, genuinely concerned and coming from many different subject areas and walks of life. The conversations are often good and insightful. And yet, when I read back through the transcripts, I notice something missing every time. The analysis is always aggregate, societal, statistical. Millions of jobs. Percentage of GDP. Demographic cohorts. Trust indices.

Never: what do I do this morning? How do I organise my day? Me, a specific person, in a specific city, with a specific set of skills that may or may not still be needed. What does my Tuesday look like in 2029? Or the mundane: how will we wash our faces in the morning, what will we eat for breakfast, or even how will we decide what to wear? The film WALL-E is a good example of this exercise. In a light-hearted way, it imagines a society where overconsumption has made the planet hard to live on and people have grown too fat to move without some sort of technological assistance, and it imagines robots falling in love. It imagined, made us imagine, and sparked conversations. Such profound challenges in such a funny movie!

That gap is not accidental. Aggregate thinking is comfortable because it keeps the human at a distance. The individual, particular, named, embedded in a family and a neighbourhood and a set of habits, is where the ethical weight and consequences are actually felt. And we consistently avoid going there. But fiction goes there. That is its specific superpower.

I write fiction under a pen name. My first published novel, A Madness of the Moon, is not a story about world-changing events. It is about the collision between scientific thinking and a family’s mythology, a story about ordinary people, in ordinary circumstances, navigating an ordinary conflict that turns out not to be ordinary at all once you are inside it. I did not write it to make an argument, but because I needed to think at that scale. In fact, the story started with an image and a question: “why is she doing that?”

My current fiction project, The Custodian of Murjan, goes somewhere more directly connected to the work I do professionally. It follows the construction of a technology-enabled project inside a conservative society, and what that project does to the specific people involved: the main character and those who interact with what he is building. Not to the society, mind you, but to the people involved, to their lives, beliefs and relationships.

Writing it has forced me to do things I would not otherwise have done. I have read seriously about spirituality and technology, the tensions between them, the ways they are sometimes partners and sometimes adversaries. I have tried to understand, at a depth that policy briefings do not require, the geography and culture of the place where I myself work. And I have, in ways I did not anticipate, been made to reflect on my own spiritual life, which has been, during a time of intense personal losses, more sustaining than I expected.

None of that was the plan, but it was what the writing demanded. And this is something I love about writing fiction. The characters take on a life of their own. They demand to do things differently from how you intended, to go places you did not imagine, and in doing so they force you into unfamiliar territory, into the very places those disruptions or technologies would take you.

Because I was thinking about spirituality in our AI-assisted future, I found myself rereading Clifford D. Simak’s Project Pope, a novel I had loved as a teenager and half-forgotten. Simak imagines a community of robots on a distant planet building Vatican-17: an infallible computational authority designed to gather all knowledge and deduce a True Faith. It is strange and funny and quietly devastating. And it asks, decades before the current conversation, exactly the right questions about what happens when systems designed to mediate knowledge begin to mediate meaning. I did not go looking for it to write this article. The writing sent me there. That is the point.

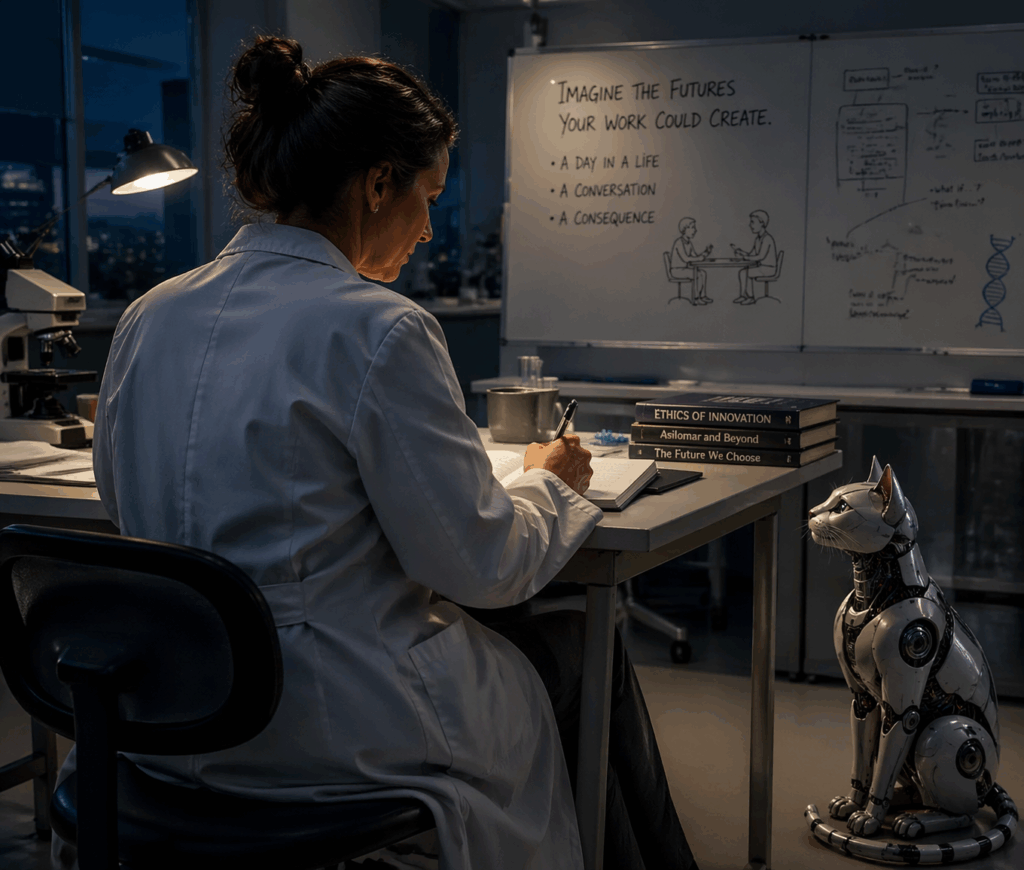

So here is the invitation, offered peer-to-peer and without drama: imagine the futures your work could create. Not at the level of the labour market, or some cosmic superhero, or some self-proclaimed best-of-the-best-of-the-best nation. But at the level of the person, real or fictional, who will live inside them.

Write it down. It does not need to be a novel. It does not need to be good. A scene. A day in a life. A conversation between two people whose circumstances your work has altered. The exercise is the point, not the product.

Not everything needs to be published. The ethical work happens the moment you sit down to write, before anyone else reads a word. It is about you writing: you, the scientist, the innovator, the disruptor, imagining where whatever you are researching, building or dreaming about could go.

I should say something about the tools, because they are present in this conversation whether we name them or not. Generative AI has removed many of the practical barriers that technically trained people used to cite for not writing. Structure, continuity, getting words onto the page: all of it is so much easier now. Use it at will. I use AI assistance in my own work as a useful prosthesis, the same way I wear glasses to actually read the words on the screen or stick post-its on the wall to visualise plotlines. A prosthesis amplifies what is already there, and what I see being amplified, in LinkedIn feeds and Kindle catalogues and content pipelines, is volume without conscience. Soulless, apparently competent, but bringing nothing new to the table. I am reading posts and novels where nothing is imagined anymore. But let’s not blame the tools. They did not produce this failure. The absence of the internal exercise, of imagining, of reflecting, did. So please use the tools. They are genuinely remarkable. But do not outsource the imagination.

A note on prior art. The scientist in me cannot close this piece without acknowledging that this argument is not new, and that others have made it with more rigour, more force, and in some cases at greater personal cost than I have here. The Russell-Einstein Manifesto (1955) called on scientists to think beyond their disciplines to the consequences for humanity. The Asilomar process demonstrated what it looks like to act on that obligation mid-advance. Scholars including Robert Frodeman, Sheila Jasanoff, and more recently those working in the tradition of responsible research and innovation have built substantial frameworks around these questions. Brian Stableford, Ursula Le Guin, and Kim Stanley Robinson, among others, have argued, each in their own way, that speculative fiction is not an ornament to serious thinking but a method of it. I stand on their shoulders, writing personally about something they analysed carefully. Read them.

And write that story.